Konec AI Chat Integration:

Turning a Technical Demo into a Conversational Product UX

Overview.

How do you make controlling dozens of smart home devices feel effortless? I led the end-to-end design of an AI-powered chat feature for the Konec Home app, enabling users to manage devices, create scenes, and access energy insights through natural-language interaction.

This feature was launched as a key innovation and showcased at industry events.

Skill: Product Thinking, Strategy, UX, UI, Prototyping & Interaction

Status: ✅ Launched (Integrated into Konec Home App)

Platform: iOS, Android

Timeline: Dec. 2024 to May 2025

Role: Software Design Lead, led end-to-end AI Chat design

01

tl;dr

What I Did

I led the full UX and UI design of Konec AI Chat—from problem framing, design strategy, and user flow development to component design, motion prototyping, and final UI delivery.

While the engineering team validated technical feasibility with a generic UI demo, I transformed that prototype into a system-consistent, emotionally engaging, and fully integrated user experience.

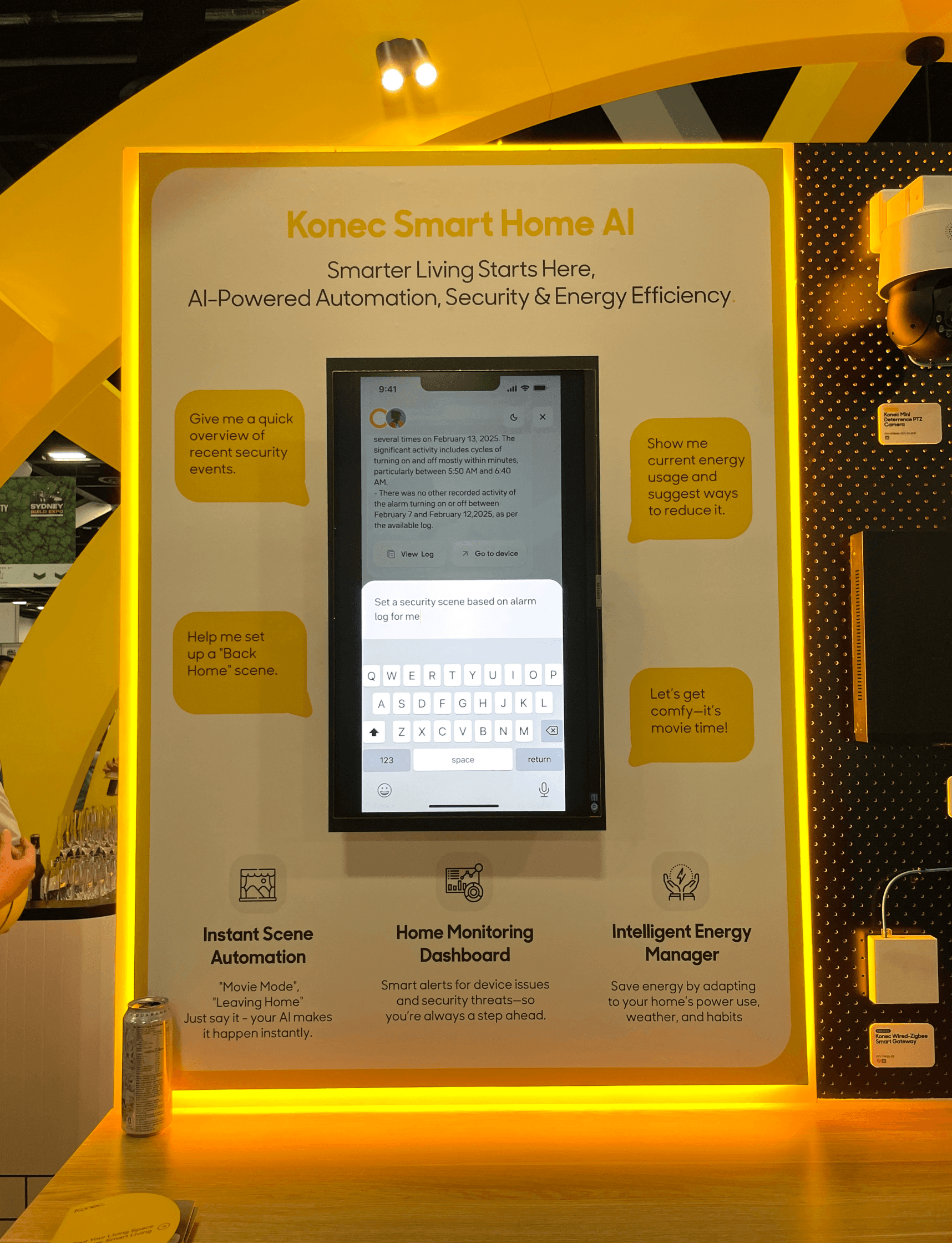

The final prototype was used at the Sydney Build Expo 2025 to present Konec AI to industry attendees, highlighting the assistant’s role in simplifying smart home control.

✦ Results & Impact

• Successfully launched to all users as part of the Konec Home app

• Reduced task friction and boosted feature discoverability through conversational guidance, while improving user engagement and making energy tracking feel more approachable

• At the Sydney Build Expo 2025, this feature attracted strong interest from both technical and consumer visitors

• Received positive user feedback citing “natural interaction flow” and “surprising intelligence” as standout qualities

Project Context

The Konec Home app supports a wide range of devices across lighting, security, energy, and automation. As more functionality was added, navigation and task complexity increased, especially for non-technical users managing multiple rooms and device types.

To address this, we introduced a conversational assistant—powered by AI—to unify control, insight, and configuration into a single, natural interface.

This feature is built upon the Konec Home system (see Case 2: Smart Home UX).

To bring this AI-powered assistant to life, our engineering team built an early demo using generic components to validate technical feasibility. That’s where I stepped in.

02

Starting from the Engineering Prototype

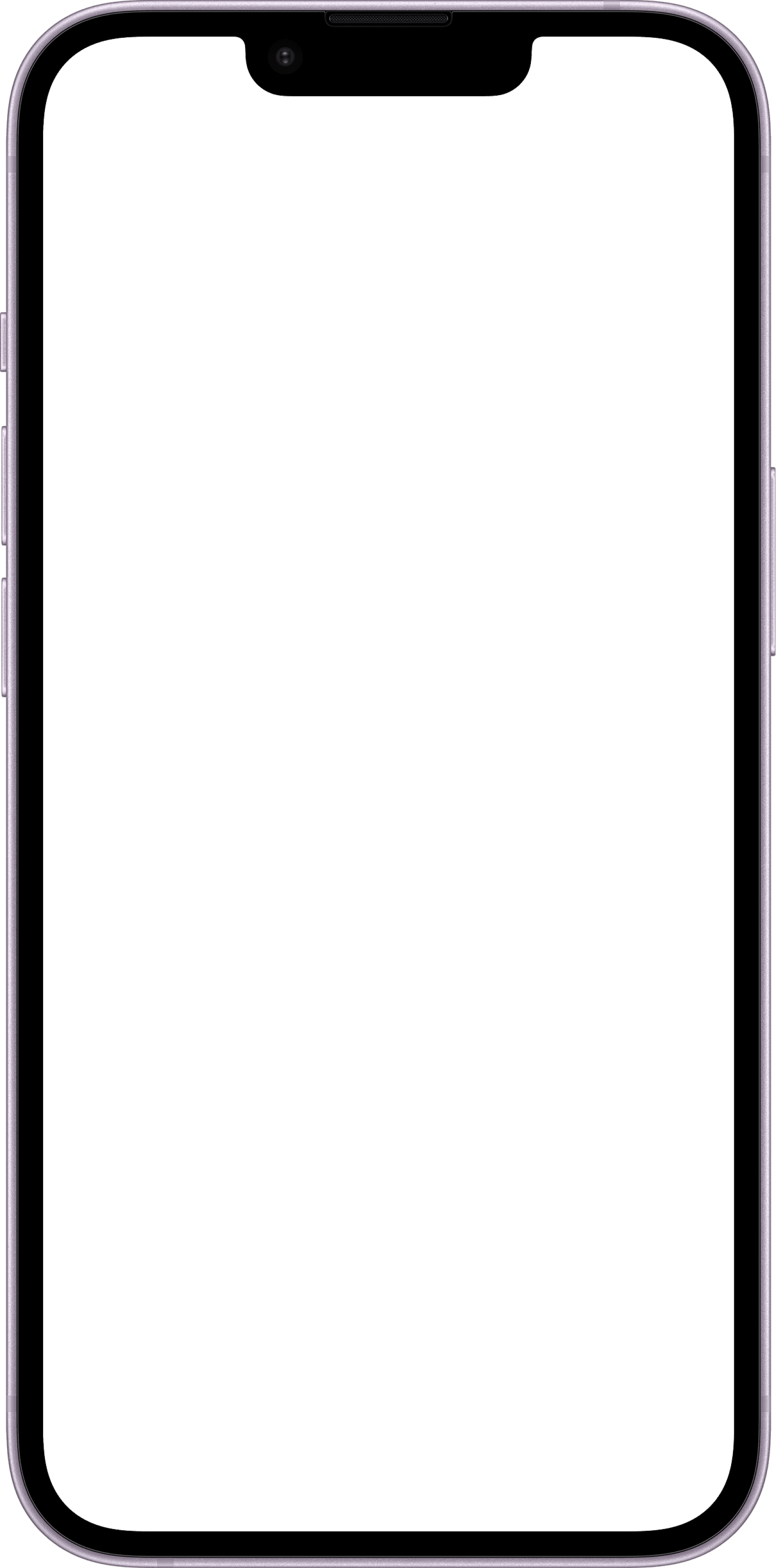

The engineering team initially built a working prototype using the open-source Flutter package ChatView, which provided basic chat UI components for message display and input handling.

This demo validated backend AI capabilities but lacked the UX clarity, feedback behaviour, and branded visual style needed for a real-world product experience.

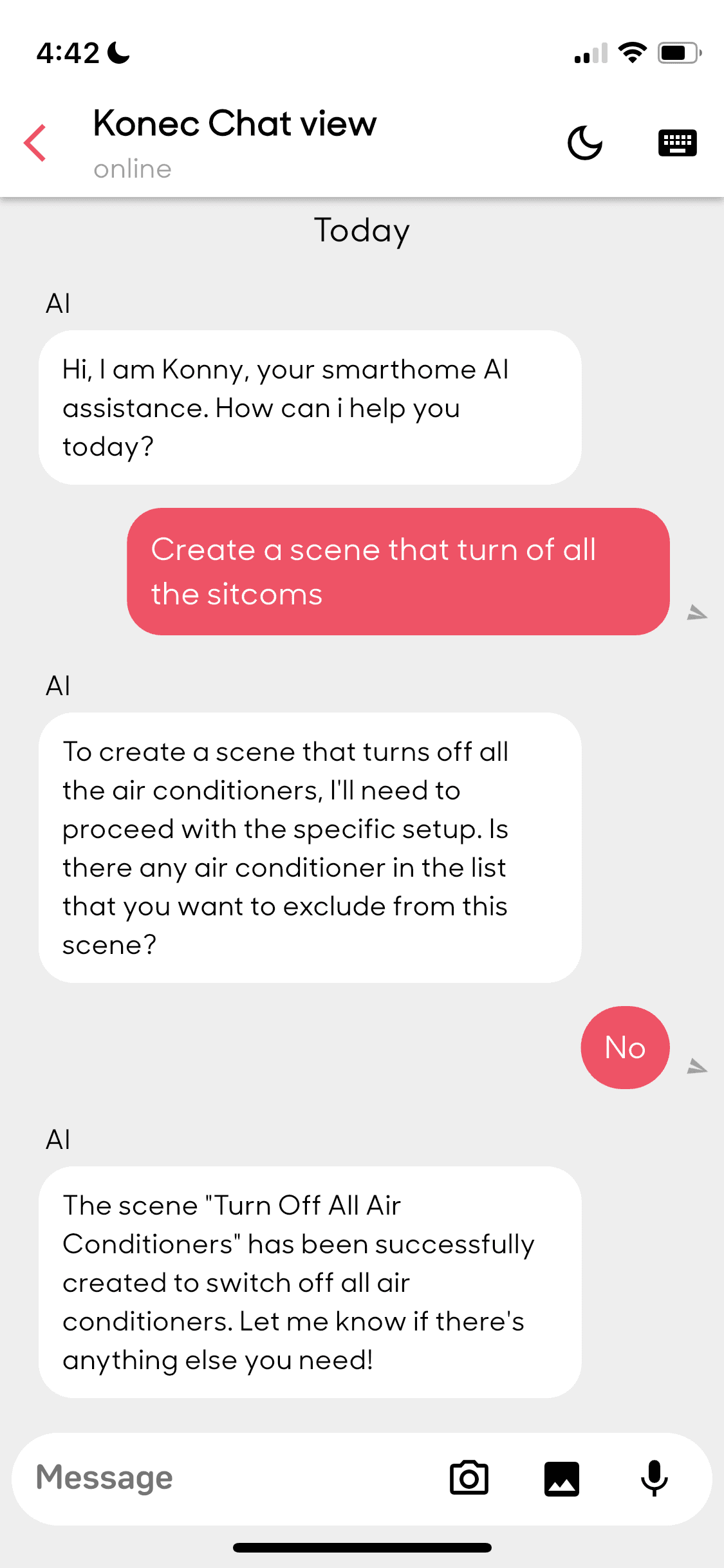

Below is a snapshot of the early demo:

Engineering demo screenshot, with the issues it exposed:

• No assistant onboarding

• Header doesn't reflect the AI interactivity

• Both user and AI used the same neutral bubble design

• No system feedback or input suggestions

• Static message blocks with no hierarchy

• Generic replies with no actionable UI or jump points

Problem Brief: What the Demo Could Do (and Couldn't)

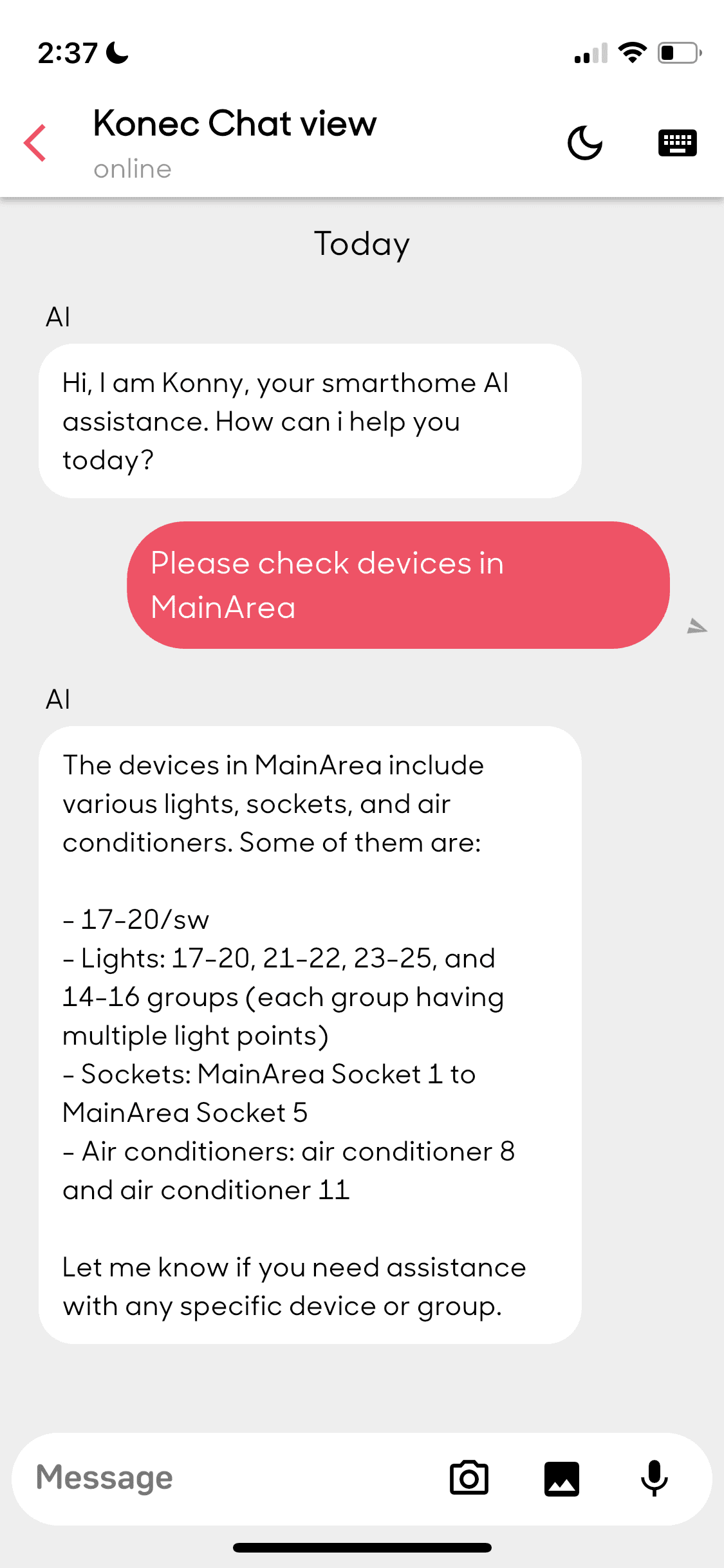

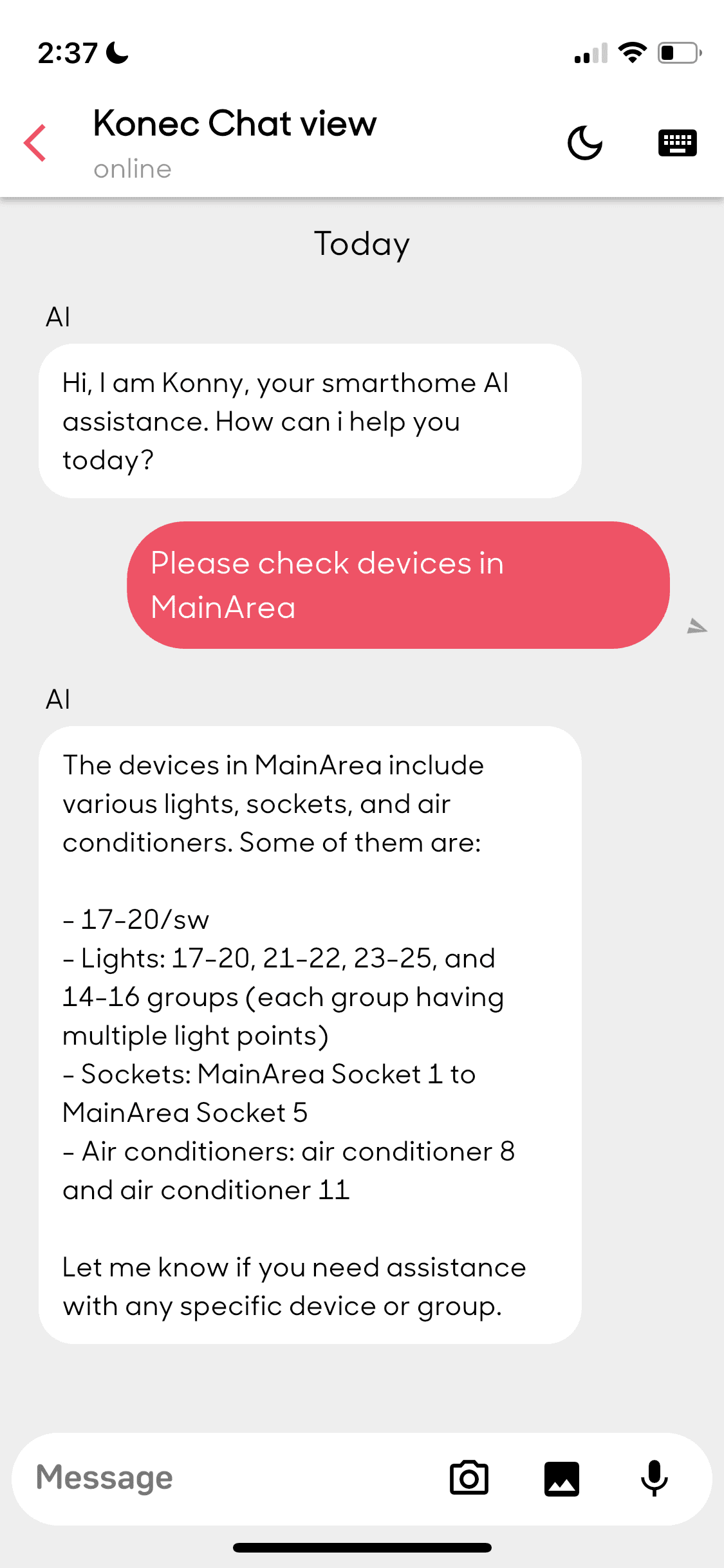

The demo was able to execute basic commands like checking device status, summarising alarm logs, and creating smart home scenes. However, these tasks were presented in a raw, unstructured format.

These limitations formed the basis for my design strategy — emotional engagement, clarity, and modular interactivity.

🟢 AI could return a device list

🔴 Formatting was dense and hard to scan

🟢 Data shown was accurate

🔴 Lacked emphasis, structure, and relevance

🟢 Scene creation was possible

🔴 Interaction felt robotic and ambiguous

So…

How Might We turn a cold, function-heavy demo into an AI assistant that feels warm, helpful, and intuitive?

03

Design Direction & Principles

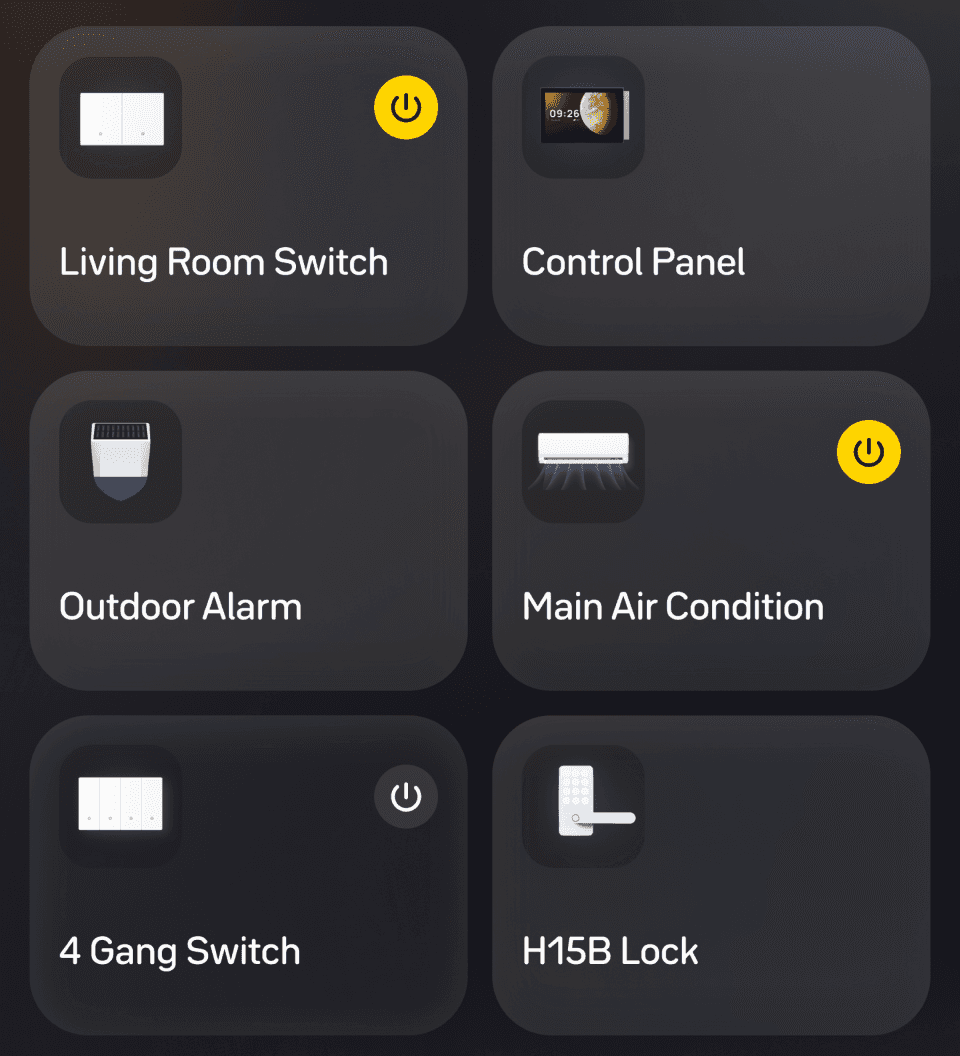

1. Designing Within and Beyond the System

Designing AI Chat meant working within a mature app ecosystem, one with a defined design system, strong visual identity, and consistent UI patterns.

But AI Chat introduced a new interaction model, one that needed to feel lightweight, contextual, and human.

Rather than building a new visual language, I reinterpreted what existed. Device tiles, scene cards, and icons were abstracted into modular reply components that felt consistent but behaved differently.

To give AI Chat a clearer identity, I deliberately shifted it to light mode by default. This visual contrast signalled its role as an intelligent layer: separate from device control, yet deeply integrated with it.

Example of how I abstracted system components into modular reply logic:

✦ This informed three principles:

• Reuse what the system already teaches

• Reframe components when context changes

• Extend patterns to support new conversational behaviours

Device tile on Homepage: Device icon + Device name + toggle

Modular reinterpretation: Repurposed device tile in reply block (Device name + shortcut)

System tiles → abstracted → Modular reply blocks

Example reply block:

The devices in Bedroom include 1 light group, 1 alarm and 1 air conditioner:

[Device tiles: Device Name + shortcut]

Let me know if you need assistance with any specific device or group.

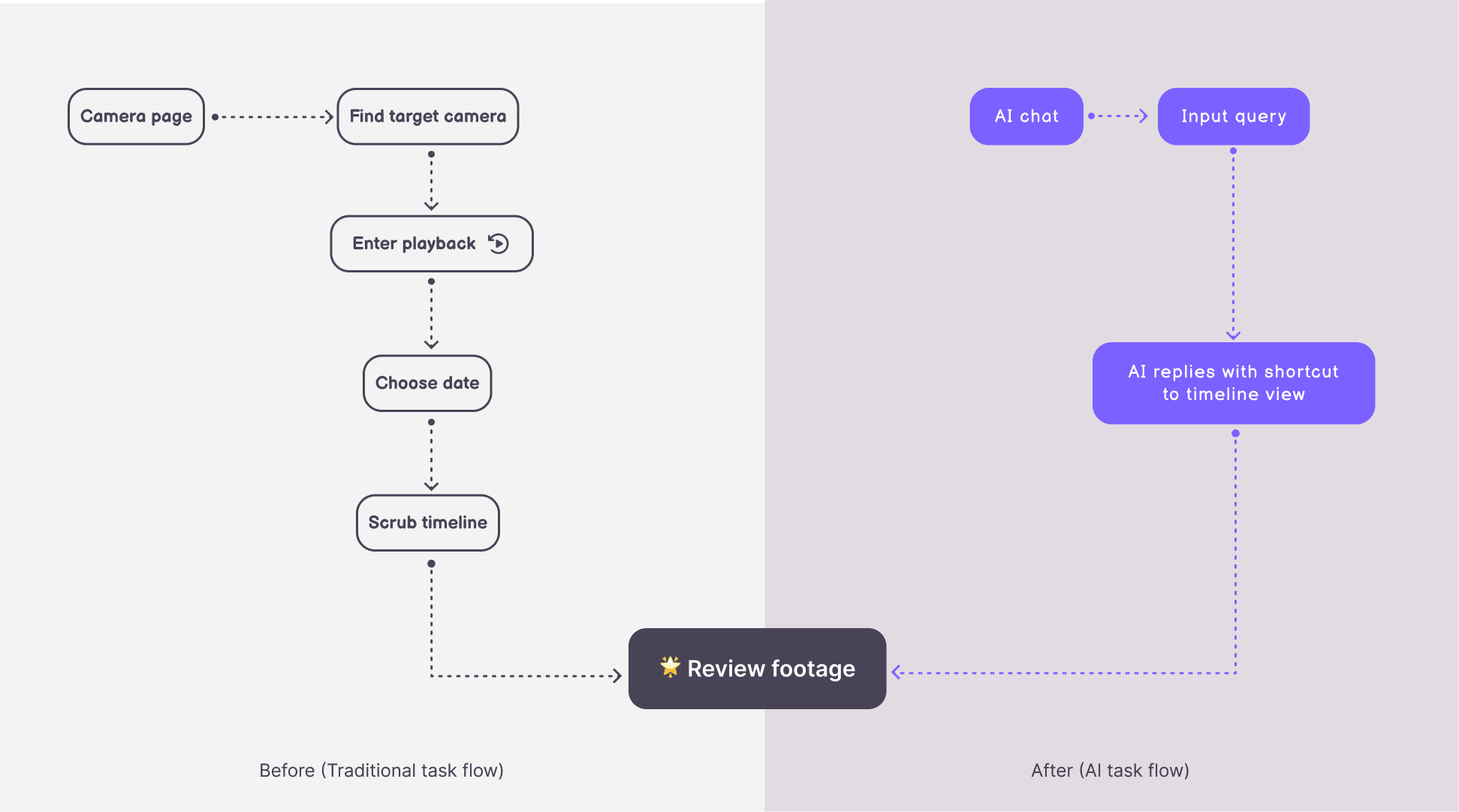

2. Interaction Friction = Design Opportunity

Where users previously needed multiple taps to reach a function, AI replies now offered direct shortcuts to devices, logs, automations, or playback.

✦ Reduced from 6 steps → 1 tap

Example of playback task flow

Strategy set the direction, but the real work began in the design.

Here’s how I led the entire process, from concept to shipped product.

04

Design Process:

From Demo to Humanised System

Rather than following a strict visual iteration sequence, I structured my design evolution around 3 key goals:

• Humanising the AI experience

• Aligning it with real user needs through flexible response models

• Integrating it seamlessly into the app’s system behaviour and visual identity

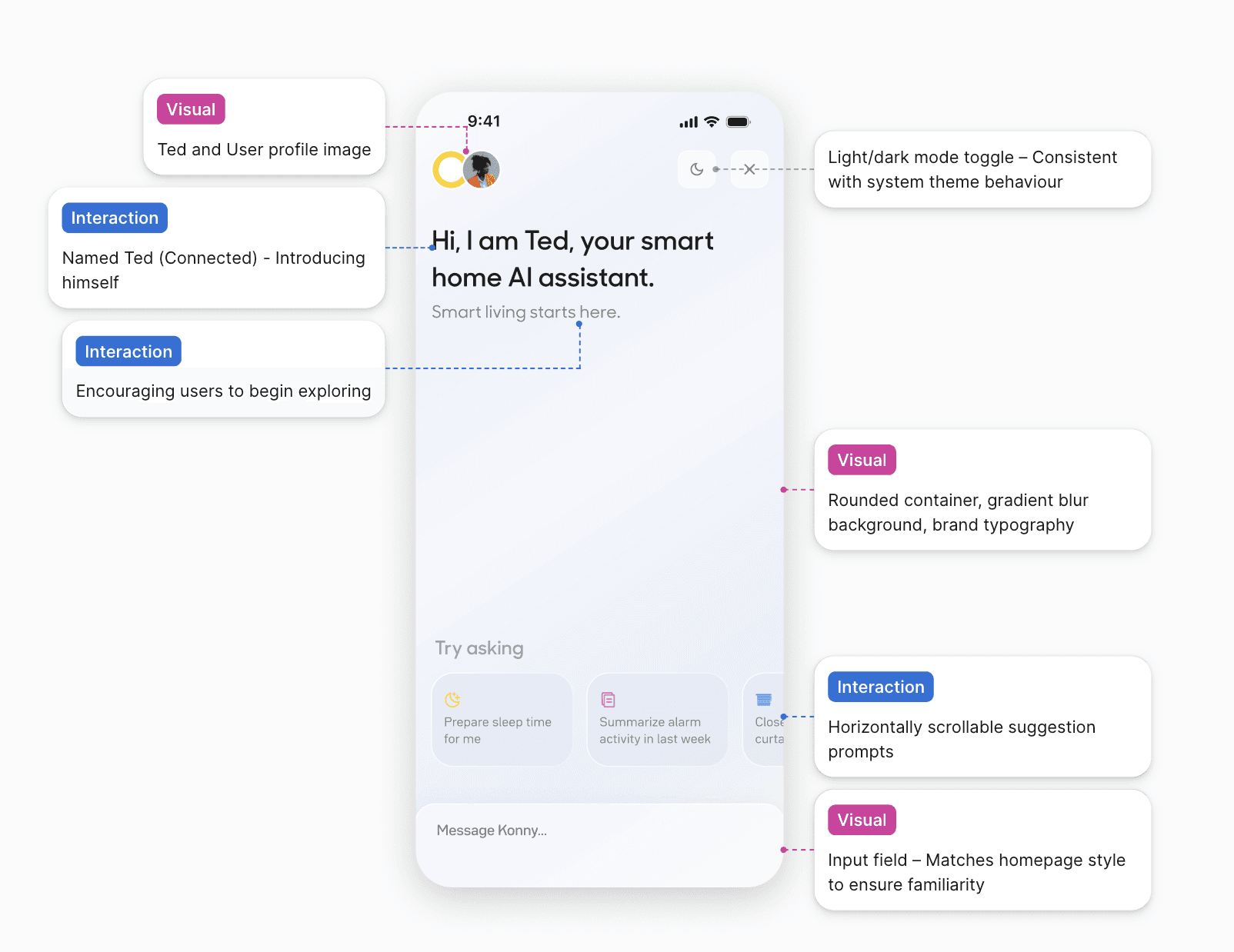

1. Giving the AI a Personality

To define the AI’s personality, I explored different tones (friendly, helpful, and calm), then aligned visual treatments and microcopy to reinforce those traits across animations, messages, and entry points.

Wireframe and Final Onboarding Screen

Wireframe elements:

• Brand Logo & User profile

• Greeting: “Hi, I’m your AI Assistant...” (friendly tone)

• “Try asking” prompts (Prepared question 1, Prepared question 2)

• Input Field

Final screen annotations:

• Use of intentional whitespace to enhance focus and calm pacing

• Suggestion prompts (“Set a relaxing scene on Saturday”, “Set morning routine in weekdays”), horizontally scrollable

• Dark mode toggle (for accessibility & system alignment)

After a user sends a message, Ted enters a brief "thinking" state

Ted's welcome animation and suggestion prompts on launch

Feedback interaction: Ted is thinking

▷ Interaction Demo

✦ Interaction and visual highlights:

• Named the assistant Ted (from "Connected") to build identity

• Ted introduces himself, followed by a gentle call to action

• Typing effect and profile icons convey presence and warmth

• “Ted thinking” state animation to show system feedback

• Suggestion prompts help users take the first step

• UI elements are aligned with the app system

By designing Ted as a conversational presence instead of a passive system, we helped users feel more comfortable, curious, and in control.

2. Building a Responsive Reply Model

Designing Ted’s reply structure was critical to delivering real smart home value. Rather than generic text messages, I created a modular UI reply system—where each response aligned with a specific user intent and app function.

Below is a breakdown of core Ted reply types. Each reply guides users toward meaningful action, not just information.

Modular Reply Types (Visual Overview)

Type 1: Device Card

Ted-generated device module combining real-time status, quick toggles, and deep links for efficient control.

Structure: Text information (quick overview) + Device Tile (Icon, Name, Live state, Toggle, Jump links)

User: Please check all devices in bedroom

Ted: The devices in Bedroom include 1 light group, 1 alarm and 1 air conditioner:

[Device tiles: Bedroom Light, VRV Air conditioner 2, Alarm; live state label shown: Offline]

Let me know if you need assistance with any specific device or group.

Annotations:

• Show live state (Open/Close/Offline)

• Quick toggle

• Jump to device control screen

Type 2: Shortcut Module

Ted-generated replies that pair contextual summaries with direct actions, combining natural-language insights with one-tap access to logs, devices, or recorded footage.

Structure: Text information + direct access

Example 1

User: Find the security camera playback from last Friday

Ted: Here’s the recording from last Friday. Tap to view.

Action: Check Playback (direct access to video playback timeline)

Example 2

User: Summarize the log of alarm last week.

Ted: In the last seven days, the alarm activity for the Konec Siren Alarm shows multiple instances of being turned on and off. Here is a brief summary:

- The alarm was activated and deactivated several times on February 13, 2025. The significant activity includes cycles of turning on and off mostly within minutes, particularly between 5:50 AM and 6:40 AM.

- There was no other recorded activity of the alarm turning on or off between February 7 and February 12, 2025, as per the available log.

Actions: View Log, Go to device (one-tap access to full event log and related device page)

Type 3: Scene Tile + Shortcut Module

Ted-generated scene module based on user intent, with contextual icon, one-tap activation, and quick access to edit.

Structure: Text information + Scene tile + Direct access

Example 1

User: Set a security scene based on alarm log for me

Ted: I've successfully set up a security automation scene "Security Scene - Alarm Triggered by Presence". This scene will trigger the Alarm whenever human presence is detected by any of the human sensors.

Scene tile: Security Scene - Alarm Trigger, with Edit Scene

Example 2

User: I wanna turn off all lights.

Ted: I've successfully set up a Tap-to-Run scene "All Switch Off". This will make it easy for you to quickly switch off all lights with a single tap. Let me know if there’s anything else you need!

Scene tile: All Switch Off, with Edit Scene

Annotations:

• Shortcut to edit scene config

• Tap to enable/disable suggested scene

• Tap to run suggested scene

• The unique icons represent the scenes created by Ted

Modular replies turned AI from a passive responder into an active guide—reducing steps, aligning with real goals, and encouraging deeper app usage.
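The three reply types described above can be pictured as a small data model. The following is only an illustrative sketch in Python, not the shipped Flutter implementation: the intent names, the `Action.target` routes, and the `build_reply` helper are all hypothetical, chosen to mirror the examples in this case study.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class ReplyType(Enum):
    DEVICE_CARD = "device_card"  # Type 1: text + device tiles
    SHORTCUT = "shortcut"        # Type 2: text + direct-access buttons
    SCENE_TILE = "scene_tile"    # Type 3: text + scene tile + edit shortcut


@dataclass
class Action:
    label: str   # button text shown in the reply, e.g. "View Log"
    target: str  # hypothetical in-app route the button jumps to


@dataclass
class Reply:
    type: ReplyType
    text: str
    tiles: List[str] = field(default_factory=list)
    actions: List[Action] = field(default_factory=list)


# Hypothetical intent names; the real intent taxonomy is not documented here.
INTENT_TO_REPLY = {
    "check_devices": ReplyType.DEVICE_CARD,
    "find_playback": ReplyType.SHORTCUT,
    "summarise_log": ReplyType.SHORTCUT,
    "create_scene": ReplyType.SCENE_TILE,
}


def build_reply(intent, text, tiles=(), actions=()):
    """Pick the reply module for an intent and attach its tiles and actions."""
    try:
        reply_type = INTENT_TO_REPLY[intent]
    except KeyError:
        raise ValueError(f"no reply type defined for intent {intent!r}") from None
    return Reply(reply_type, text, list(tiles), list(actions))


# Usage: the alarm-log summary from the Type 2 example.
reply = build_reply(
    "summarise_log",
    "Here is a brief summary of last week's alarm activity.",
    actions=[
        Action("View Log", "/logs/alarm"),
        Action("Go to device", "/devices/alarm"),
    ],
)
```

Keeping the intent-to-module mapping in one table makes the design rule explicit: every supported intent resolves to exactly one reply layout, and an unsupported intent fails loudly instead of falling back to unstructured text.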

▷ In-Context Interaction

Now that Ted can think, speak, and assist, how do we make sure he belongs in the system?

3. System Alignment & End-to-End UX Completion

Once interaction logic and reply models were solidified, I ensured the design aligned fully with the broader app experience:

Dark/Light Mode

Bi-direction

• Matched visual style with Konec app’s light and dark modes

• Designed bidirectional navigation between chat and device/scene/energy pages

• Built full user flow: AI onboarding → message → reply → shortcut action → return to AI

• Validated alignment with motion demos and flow walkthroughs (both in Figma and live demo)
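The user flow in the list above (AI onboarding → message → reply → shortcut action → return to AI) can be sketched as a tiny state machine. This is a hedged illustration rather than the app's actual navigation code: the `Screen` names are hypothetical, and two transitions are assumptions on my part, namely that "return to AI" lands back on the reply in the conversation, and that a user can send a new message after a reply.

```python
from enum import Enum, auto


class Screen(Enum):
    ONBOARDING = auto()       # Ted's welcome and suggestion prompts
    MESSAGE = auto()          # user sends a message
    REPLY = auto()            # Ted answers with a modular reply
    SHORTCUT_ACTION = auto()  # user jumps to a device/scene/energy page


# Allowed transitions. SHORTCUT_ACTION -> REPLY models "return to AI";
# REPLY -> MESSAGE (asking a follow-up) is an assumption.
FLOW = {
    Screen.ONBOARDING: {Screen.MESSAGE},
    Screen.MESSAGE: {Screen.REPLY},
    Screen.REPLY: {Screen.MESSAGE, Screen.SHORTCUT_ACTION},
    Screen.SHORTCUT_ACTION: {Screen.REPLY},
}


def is_valid_path(path):
    """Return True if every consecutive pair of screens is an allowed transition."""
    return all(nxt in FLOW[cur] for cur, nxt in zip(path, path[1:]))


full_flow = [
    Screen.ONBOARDING,
    Screen.MESSAGE,
    Screen.REPLY,
    Screen.SHORTCUT_ACTION,
    Screen.REPLY,
]
```

Writing the flow down this way is one quick check on bidirectional navigation: every page a shortcut opens must have a defined way back into the chat.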

This ensured consistency, familiarity, and a smooth experience across the app, bridging new AI behaviours with existing mental models.

✦ Design highlights & User Value:

05

AI Capabilities & Strategic Vision

Ted currently supports key smart home tasks through natural-language input:

• Device control

• Device status checks

• Security log queries

• Tap-to-Run and Automation setup

• Troubleshooting help

In future plan: automating tasks.

These were prioritised based on user needs and integrated with the app’s control and scene systems.

Looking ahead, I’m actively involved in defining future enhancements to expand Ted’s intelligence and flexibility.

I collaborated with the PM team to define the future voice interaction scope, exploring wake-word activation and cross-platform triggers. We also planned for Google Nest integration via Home Hub, extending Ted’s reach beyond the app and into the ambient home environment.

These directions will deepen Ted’s role as a proactive, multi-modal assistant, moving from reactive command handling to ambient smart living support.

06

Reflection

This project expanded my expertise beyond traditional UI/UX into designing intelligent, conversation-driven experiences. I learned how to translate abstract AI capabilities into user-centred interaction flows, and how to shape personality, context awareness, and responsiveness into something truly usable.

It also strengthened my ability to collaborate across engineering and product teams—turning a technical prototype into a polished, system-integrated solution. Most importantly, it taught me how to design for flexibility: balancing structure with openness, predictability with intelligence.

This experience deepened my confidence as a senior-level designer who can lead not just execution, but also strategic definition for emerging product domains.

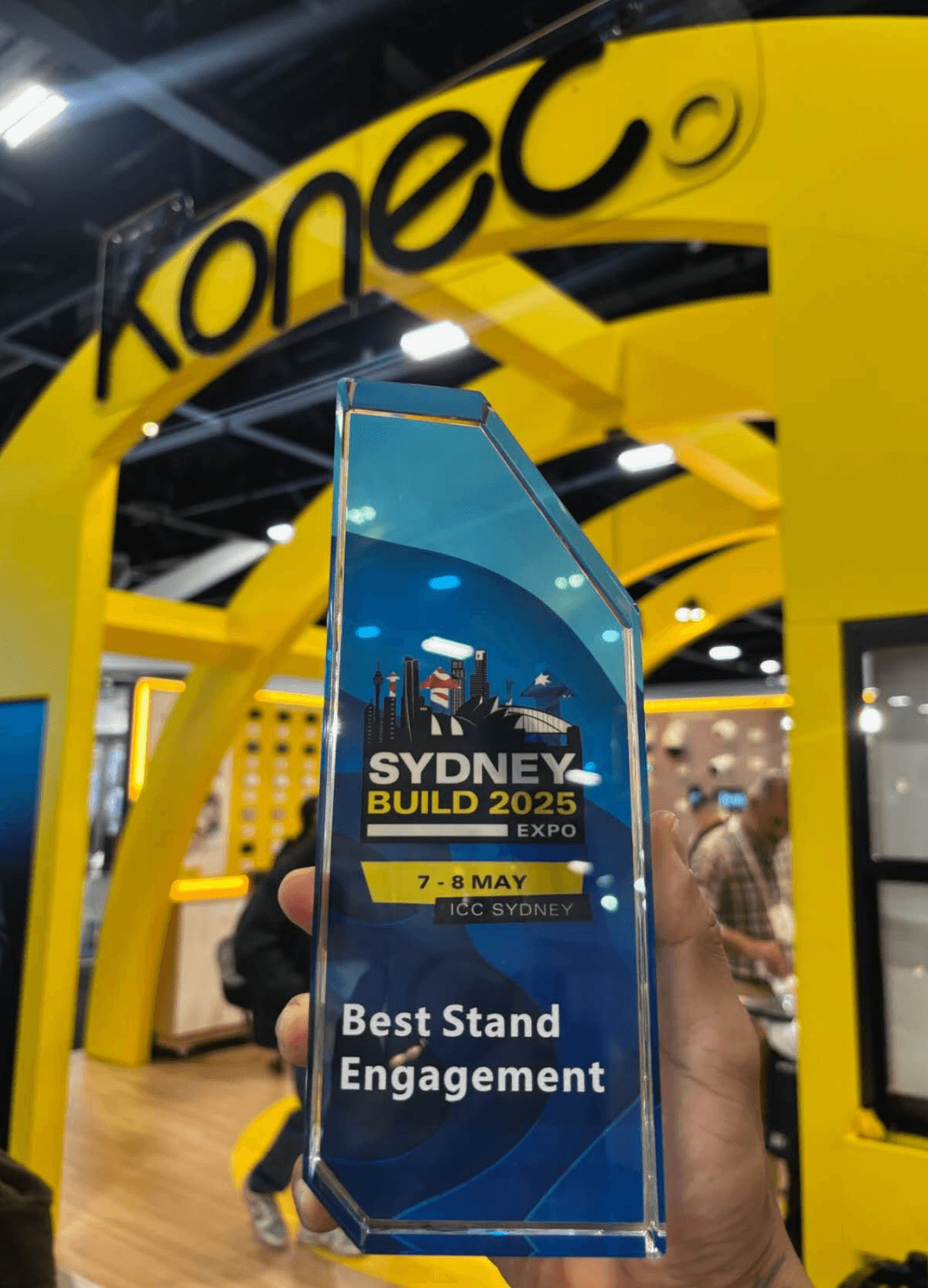

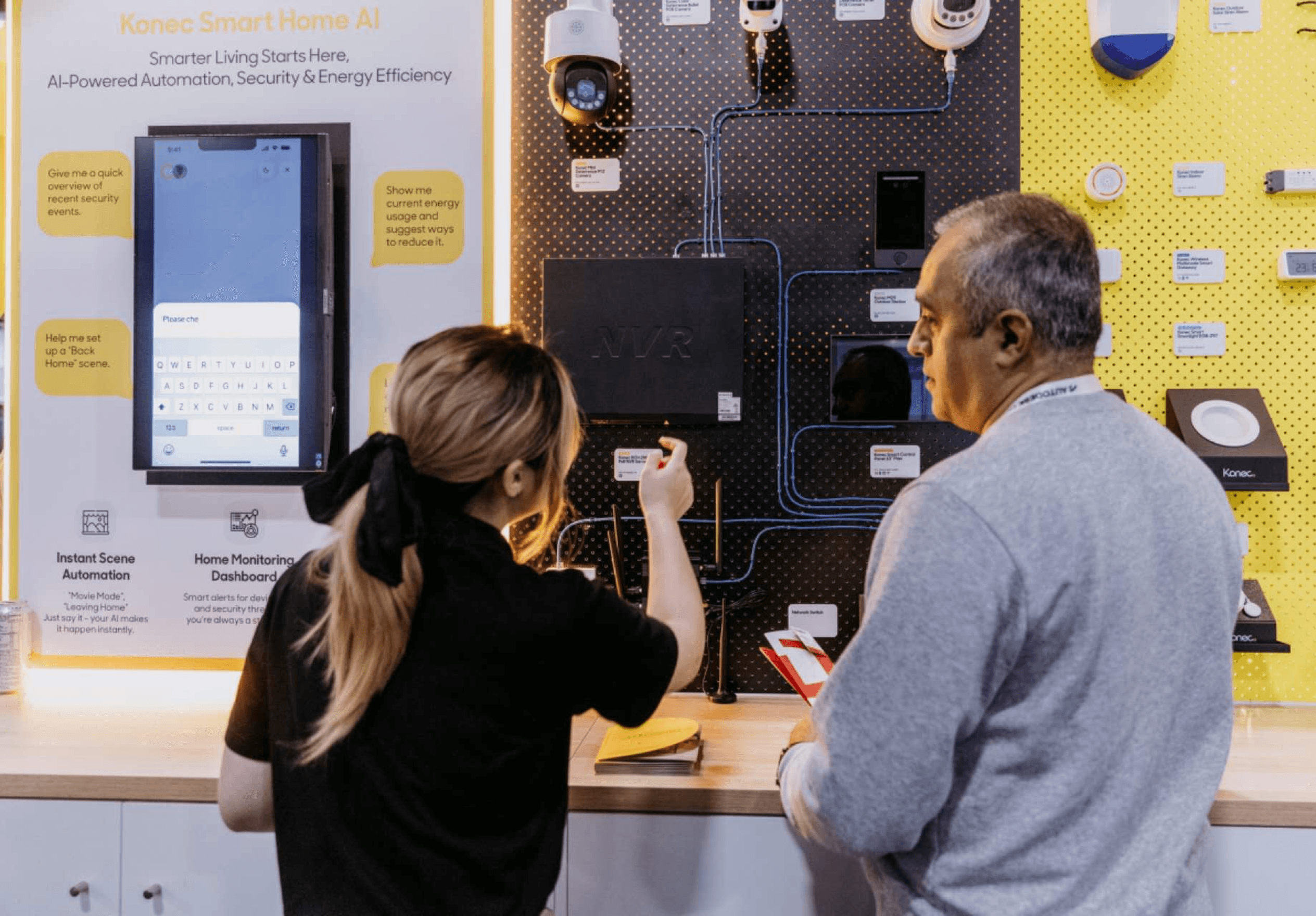

07

Real-World Visibility

The AI Chat experience was showcased live at the Sydney Build Expo 2025, where I helped demonstrate its real-time capabilities to industry professionals. Our booth was awarded “Best Stand Engagement”, recognising the clarity and accessibility of our demo, especially the AI-driven scene and device interactions.

[fig 1]

Me explaining the AI Chat experience to a visitor

AI panel setup

[fig 2]

Live visitor interaction with the AI screen

The AI panel interface in the full smart home wall

Booth award – Best Stand Engagement

[fig 3]

[fig 4]

[fig 5]